This is post #17 in my IHL series. It picks up where my previous post on data as a military objective ended, and discusses the reasonable-commander standard in cyber operations under different legal traditions. It also looks ahead to the AI-augmented decision-space question that the next post will take up.

Estimated reading time: 28 minutes

I. The puzzle: same cycle, different friction

Cyber operations during armed conflict feed into the same targeting cycle as kinetic strikes, but the legal tests strain at specific points along that cycle. Three friction points keep returning across the operational record: the depth and breadth of foreseeable reverberating effects through interconnected information and communication technology (ICT), dual-use infrastructure that carries military and civilian traffic on the same wires, and the operational tempo of the cyber domain itself. None of these is genuinely new to international humanitarian law (IHL). What is new is the texture of the foreseeability evidence the targeting cell has to put on the table before the commander decides.

Two earlier posts in this series sit in the background. The data-as-object post framed the definitional question that the operational decision in this post is downstream of. The precautions post on Article 57 AP I set out the underlying treaty architecture. This post asks how the targeting cycle and the reasonable-commander standard work together to produce — or fail to produce — a defensible ex ante assessment in cyber operations.

II. The legal toolkit: two traditions, one assessment

Cyber operations do not displace the legal toolkit that governs targeting; they stress it. The apparent gap between the Anglo-American “reasonable-commander” framing and the civil-law-tradition formulations is narrower than the labels suggest, and where it is real, it matters.

The reasonable-commander standard

The standard’s modern operational form crystallised around the International Criminal Tribunal for the former Yugoslavia (ICTY) committee report on the NATO bombing campaign against the Federal Republic of Yugoslavia, which suggested that “the determination of relative values must be that of the ‘reasonable military commander’” (at § 50). Henderson and Reece track the standard’s intellectual genealogy and locate its operational core in a good-faith assessment by the commander — made ex ante, on the information actually available, and across the full range of foreseeable effects (Henderson & Reece, 51 Vand. J. Transnat’l L. 835 (2018), at pp. 841–847).

The U.S. Department of Defense codifies this approach in the Law of War Manual (§ 5.10.2.2, at pp. 252–253), which frames the proportionality decision as a good-faith assessment by the commander based on information reasonably available at the time. The North Atlantic Treaty Organization (NATO) Allied Command Operations Handbook on the Protection of Civilians (2021) extends the same logic into Allied targeting doctrine, expressly directing that the targeting process include legal and engineering considerations and take into account second- and third-order effects on the civilian environment, services and infrastructure (at p. 25).

Hathaway and her co-authors situate this framing within their broader argument about dual-use objects, stressing that the reasonable-commander standard does real analytical work only if the commander is required to take account of foreseeable indirect consequences — precisely the point at which cyber operations through interconnected ICT infrastructure put the standard under strain (Hathaway et al., 134 Yale L.J. 2645 (2025), Part III.B.3, at pp. 2740–2748).

The civil-law-tradition counterparts

Continental European discourse rarely speaks of a “reasonable commander” as such. A doctrinally adjacent formulation appears in the Galić Trial Chamber’s articulation of the proportionality test, which asks whether “a reasonably well-informed person in the circumstances of the actual perpetrator, making reasonable use of the information available to him or her, could have expected excessive civilian casualties to result from the attack” (Prosecutor v. Galić, Trial Judgement, IT-98-29-T, 5 December 2003, at § 58). Footnote 109 of the same judgment records that Germany declared on ratifying Additional Protocol I to the Geneva Conventions (AP I) that proportionality decisions must be judged on the basis of information available to the responsible person at the time, and not in hindsight — a position joined by Switzerland, Italy, Belgium, the Netherlands, New Zealand, Spain, Canada and Australia, with no objection raised by any other state party.

The Prlić Trial Chamber later applied a comparable assessment when evaluating the destruction of the Old Bridge of Mostar. Having found the Old Bridge to be indispensable to the front-line combat operations and supply lines of the Army of the Republic of Bosnia and Herzegovina (ABiH) (Prosecutor v. Prlić et al., Trial Judgement, IT-04-74-T, 29 May 2013, Vol. 3, § 1582), the chamber nonetheless held that destruction of the bridge was “disproportionate to the concrete and direct military advantage expected” (ibid., § 1584). The decision generated sharp doctrinal controversy on whether the chamber genuinely engaged with the anticipated military advantage. For our purposes, the relevant point is the assessment frame: an ex ante, information-bounded, foreseeability-driven evaluation indistinguishable in structure from the reasonable-commander standard.

The German Zentrale Dienstvorschrift ZDv A-2141/1 Humanitäres Völkerrecht in bewaffneten Konflikten (in force 20 February 2018) places the same test at the centre of its precautions-in-attack regime. § 416 (at p. 48) requires every “verantwortliche militärische Führerin bzw. verantwortliche militärische Führer” — every responsible female or male military commander — to take the AP I Article 57 precautions before engaging a target, and closes with the express command that the decision to attack must be made on the basis of all information available at the time of acting and is not to be assessed against the actual course of events as later recognised. The manual does not adopt either “reasonable commander” or “verständiger Befehlshaber” as a term of art, but the ex ante test it articulates is functionally identical to the Galić formulation. France, in its 2019 cyber position paper (at pp. 15–16), similarly reaffirms the ex ante perspective and the duty to take all feasible precautions, without adopting a distinct doctrinal label.

What the two traditions share — and where they part

Convergence runs deeper than the vocabulary suggests. Both traditions expressly share two commitments. First, the assessment is ex ante: judged on information available at the time of decision, not by hindsight. Second, the standard is information-bounded: a commander cannot be held to a level of foresight unattainable in the operational circumstances, but must make reasonable use of what is in fact known. Running through both, in my view, is a third assumption that gives the standard its operational bite: a defensible decision is one that can be reconstructed and tested against the standard after the fact, which is to say the documentary record matters even where neither tradition expressly says so.

The genuine points of friction are narrower but real. The first concerns the breadth of foreseeable harm. There is a substantial scholarly literature placing reverberating effects squarely within what a reasonable commander must consider; Henderson and Reece are more cautious, warning against an unbounded conception of foreseeability that would render the standard unworkable in operational practice (at pp. 850–855). The second concerns the institutional posture of the standard. In the criminal-law lens of Galić and Prlić, the standard frames the mens rea (the mental element of the offence) inquiry that a tribunal must apply post hoc; in the doctrinal lens of the DoD Manual and the NATO ACO Handbook, it functions as an operational compliance yardstick that targeting cells must apply in real time. Both functions matter, but a practitioner needs to know which one is in play before invoking the standard.

III. Why cyber strains the standard

The standard holds. What strains is the evidence base it requires.

Reverberating effects through interconnected ICT

Robinson and Nohle’s foundational treatment of reverberating effects in the context of explosive weapons in populated areas establishes the doctrinal baseline that the ex ante assessment must capture foreseeable indirect harms, not only direct blast and fragmentation effects (Robinson & Nohle, 98 IRRC 107 (2016), at pp. 110–116). Their textual reading of the AP I term “may be expected” — which the 1974–77 Diplomatic Conference expressly refused to confine to harms in the immediate vicinity of the military objective (at p. 113) — applies with full force in the cyber domain.

The cyber-specific contour is harder. In a kinetic strike on an electrical substation, the foreseeable second-order effects reach the patients in the intensive-care units that the substation feeds. The chain is short; the topology is mappable. In a cyber operation against the same substation’s control system, the foreseeable second-order effects reach not only those patients but also every system, public and private, that depends on the operator’s grid management software, the vendors that share that software stack, and the customers that rely on those vendors. The “blast radius” is a network topology, not a kilometre radius. The ICRC’s institutional position recognises this: foreseeable indirect effects on the civilian population — including those caused by the disruption of essential services through interconnected systems — must be included in the proportionality assessment (ICRC, 2019, at pp. 6–8).

Geiss and Lahmann anticipated this problem more than a decade ago, observing that distinction in cyberspace is structurally complicated by the fact that civilian and military traffic share the same physical and logical infrastructure to a degree that has no clean kinetic analogue (Geiss & Lahmann, 45 Israel L. Rev. 381 (2012), at pp. 391–397). Mačák and Pircher build on this in their forthcoming chapter, mapping cyber harm across five categories: direct harm to civilian systems and services, the civilianisation of the digital battlefield, non-tangible psychological and economic harm, systemic and cascading risks, and escalation risks (Mačák & Pircher, ECIL Working Paper 2025/1, at pp. 3–5). Two of those categories, direct harm and systemic and cascading risks, translate directly into the reverberating-effects analysis the reasonable commander must perform.

Dual-use infrastructure and the targeting decision

The data-as-object question I addressed in the previous post sits in the background here. Even when the legal status of the target is settled — say, an enemy military communications path running through a commercial telecommunications operator — the ex ante assessment has to work out what civilian functions ride on the same infrastructure and what feasible alternatives exist. NATO’s Allied Joint Publication AJP-3.20, Allied Joint Doctrine for Cyberspace Operations recognises this directly in section 3.10 (at p. 21), warning that some objects in cyberspace “have both military and civilian uses” and that “if dual-use objects are to be targeted, careful analysis must be carried out to determine if they constitute a lawful military objective”. Schmitt’s Wired Warfare 3.0 applies the same template through two policy proposals — special protection for essential civilian functions and services, and a balancing of negative civilian effects against conflict-related benefits where IHL’s attack rules do not apply — both of which engage the dual-use civilian cyber infrastructure problem directly (Schmitt, 101 IRRC 333 (2019), at pp. 345–353).

This is where the targeting cycle’s documentary discipline becomes indispensable. The reasonable-commander test does not relax in the cyber domain. What changes is the kind of foreseeability evidence the cell must have on the table before the commander signs off, and Section IV turns to how the cycle generates that evidence.

Tempo and the human-in-the-loop question

Cyber operations move fast. Vulnerabilities can be exploited in milliseconds, and access windows close as defenders patch or notice. Biller’s Year Ahead 2026 piece for the Lieber Institute frames the trajectory bluntly: AI-enabled cyber capabilities will increasingly operate at speeds that exceed those of human operators, and traditional target-specific legal review will struggle to keep up. His third prediction is the relevant one for our framework — the speed of AI-enabled cyber operations will shift legal review away from the moment of employment and toward ex ante governance through system design, training, and testing.

This reframing matters. The reflexive narrative in disarmament discourse treats AI as the cause of the tempo problem and the displacement of human judgement as its consequence. The narrative is half-right at most. Operational tempo that exceeds the unaided human observe-orient-decide-act (OODA) cycle is not new to the battlefield. Naval Close-In Weapon Systems have for decades engaged inbound threats inside human reaction windows, with rule-based automation rather than judgement. The relevant legal question, namely whether the weapon system’s design ensures compliance with distinction, proportionality and precautions across the full envelope of foreseeable use, has always been answered at the design and review stage, not in the half-second before engagement. AI Decision Support Systems (DSS), properly designed, do not collapse the deliberative space; they expand it, by performing the rapid synthesis of information that the commander needs in order to make a considered judgement on the matters that genuinely require human deliberation.

Dorsey’s concerns are functionally compatible with this critique, though her angle is different. Her recent IRRC piece argues that growing reliance on AI-enabled DSS risks displacing the contextual, qualitative judgement that lawful proportionality assessment requires, and warns against a quantification logic that may “normalise civilian harm” (Dorsey, 107 IRRC No. 930 (2025), at pp. 1043–1044 and 1060, 1064). Her concerns about over-reliance on automated outputs and the erosion of accountability deserve serious engagement. Where I depart from her is on the further inference that the safest answer is to constrain the use of AI in targeting. In my view, the unaided human commander facing cyber-domain tempo without DSS support is exposed to a worse failure mode: an ex ante assessment made on incomplete information because the commander could not synthesise the available evidence in time. That is a humanitarian risk, not a humanitarian safeguard.

The honest answer, on the present record, is that AI is part of the response to a tempo problem that exists independently of it. The reasonable-commander standard is unchanged. What the cyber domain demands is more rigorous ex ante governance and a documentary trail that lets the commander defend the ex ante assessment when the time comes. The targeting cycle, to which I now turn, is the institutional architecture that delivers both.

IV. The targeting cycle as discipline: how the six phases operationalise the standard

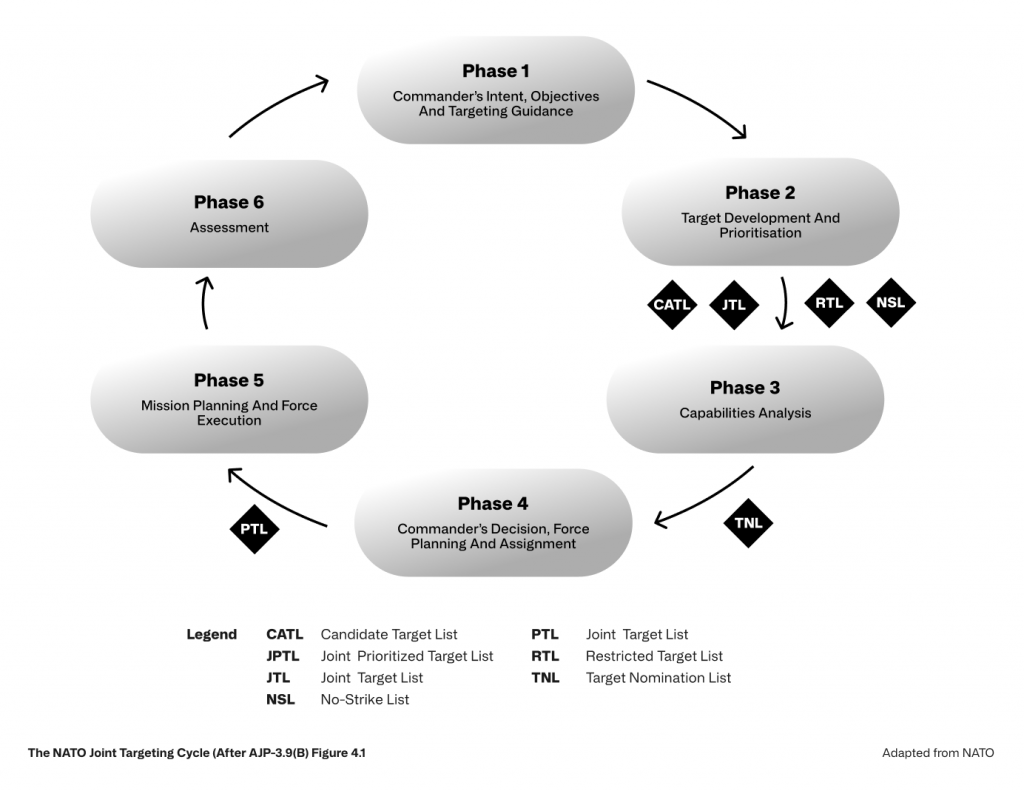

The reasonable-commander standard would be a slogan if practitioners had to reconstruct the ex ante assessment from memory after the fact. The targeting cycle is the institutional architecture that prevents that. NATO’s Allied Joint Publication AJP-3.9(B), Allied Joint Doctrine for Joint Targeting (Edition B, Version 1, November 2021) sets out the six-phase cycle that frames Allied targeting practice (Figure 4.1, at p. 4-5).

That cyber operations follow this cycle is doctrinally settled in NATO. AJP-3.20 § 3.9 (at p. 21) confirms that “the fundamental LOAC principles of military necessity, humanity, proportionality and distinction, apply to COs”, and directs that targeting in cyberspace operations conducted in support of Alliance Operations and Missions adhere to the AJP-3.9 target validation process. NATO doctrine also flags cyberspace operations as inherently sensitive — those reasonably likely to result in loss of life, significant property damage, or adverse foreign policy consequences are routed through the Sensitive Target Approval and Review (STAR) process for elevated review (AJP-3.9(B) § 3.3 footnote 50, at p. 3-5). Cyber operations therefore receive more doctrinal scrutiny under the cycle, not less.

Phases 1 to 3 — where most of the proportionality work happens

Phase 1 sets the end state and the commander’s objectives, framed by the strategic targeting direction the Commander Joint Task Force (Commander JTF) receives from the Supreme Allied Commander Europe (SACEUR) (AJP-3.9(B) §§ 3.1–3.2, at pp. 3-1 to 3-2; the cycle’s Phase 1 label of “Commander’s intent, objectives and targeting guidance” appears at AJP-3.9(B) Figure 4.1, at p. 4-5). For cyber operations, the Phase 1 question is whether a cyber effect is a coherent way to achieve the operational objective at all, given the alternatives available. That question is doctrinal and strategic rather than legal, but it shapes everything downstream.

Phase 2 (target development) is where the bulk of the proportionality and precautions work happens. AJP-3.9(B) § 4.3 (at pp. 4-2 to 4-3) requires the Commander JTF to “ensure compliance with the applicable legal framework, especially IHL/LOAC”, to confirm that targets meet legal and policy requirements, and to ensure conformity with the NATO Protection of Civilians concept. The same three obligations are replicated for Component Commanders at § 5.1.1 (at p. 5-1). The doctrine therefore imposes the legality check as a continuing duty across operational and tactical levels, not as a one-time Joint Targeting Coordination Board (JTCB) checkpoint. The NATO ACO Handbook on the Protection of Civilians builds on this, expressly directing that targeting decisions take into account potential second- and third-order effects on the civilian environment, services and infrastructure, and noting that such considerations may emerge during target development and must be taken into account during target nomination and prioritisation (at pp. 25, 27, 31).

For cyber operations, the practitioner question is what the systems-approach requirement entails in practical operational terms and what the foreseeability evidence base actually has to look like. Section VI returns to that question with concrete recommendations.

Phase 3 — capabilities analysis — is where weaponeering meets foreseeability. AJP-3.9(B) Figure 2.1 (at p. 2-6) describes Phase 3 as determining “the functional characterization of the target, identify risk factors and likely effects and damage to protected objects and functions”. For kinetic capabilities, the effects characteristics of a given munition against a given target are reasonably well characterised. For cyber capabilities, the corresponding analysis is harder. The behaviour of an exploit chain against a real target is sensitive to the target’s specific configuration, patch level, and integration with adjacent systems. A capability that worked predictably in a test environment may behave differently against a live target, and that uncertainty has to be priced into the foreseeability assessment. Schmitt’s analysis in Wired Warfare 3.0 makes the broader point: the cyber domain’s interconnection and uncertainty pose particular challenges for proportionality and precautions assessments, which his policy proposals seek to address through special protection for essential civilian functions (Schmitt, 101 IRRC 333 (2019), at pp. 345–353).

Phases 4 and 5 — where the ex ante test does its work

Phase 4 (force planning and assignment) translates the validated targets and matched capabilities into a Joint Prioritised Target List (JPTL), with executing authority assigned. Phase 5 (mission planning and force execution) is where the operation is carried out, and where the ex ante test the Galić Trial Chamber articulated does its real work. The chamber asked whether a reasonably well-informed person in the actual circumstances of the perpetrator could, on the available information, have anticipated excessive civilian harm (Galić § 58, as cited in Section II). Footnote 109 of the same judgment records the German declaration on AP I ratification: the proportionality decision must be judged on information available to the responsible person at the time of acting, not in hindsight. The same principle is articulated in § 416 of ZDv A-2141/1 at p. 48. For European readers, this is the doctrinal pivot point. The German manual and the Galić chamber are saying the same thing the DoD Manual is saying: the test runs on information available to the commander when the commander decides.

For cyber operations in Phase 5, this matters in a particular way. AJP-3.9(B) § 5.3 sets out the dynamic-targeting Find–Fix–Track–Target–Engage–Exploit–Assess (F2T2E2A) sequence (at pp. 5-2 to 5-3, Figure 5.1 at p. 5-3), which compresses the time between detection and engagement. The ex ante test does not relax under tempo pressure, but the information genuinely available to the commander narrows. The discipline that protects the commander is the work done at Phases 2 and 3 — the foreseeability evidence developed there travels with the target into Phase 5 and frames the commander’s ex ante judgement at the moment of decision.

Phase 6 — where the documentary trail closes

Phase 6 — assessment — measures the extent to which the desired effects were created and recommends the next iteration. AJP-3.9(B) § 4.3 (at p. 4-4) obligates the Commander JTF to ensure that the combat assessment cell evaluates the effectiveness of the targeting effort against the operational objectives. For cyber operations, the assessment phase has a particular weight. Because the effects of a cyber action propagate through interconnected systems, the actual reverberating effects only become visible after the operation. The assessment phase is where the cell tests the foreseeability assumptions made at Phase 2 against the observed reality. Where those assumptions held, the cycle has worked as designed. Where they did not, the next iteration of Phase 2 has to incorporate what the assessment revealed.

The U.S. Civilian Harm Mitigation and Response Action Plan (CHMR-AP, 2022) institutionalises that feedback loop on the U.S. side, with civilian-harm mitigation built into the joint targeting process (Objective 4, at pp. 12–14) and integrated into doctrine, planning, training and exercises across the spectrum of operations (Objective 3, at pp. 9–11). The NATO ACO Handbook on the Protection of Civilians does similar work for Allied targeting. Together, these doctrinal commitments make Phase 6 the institutional learning loop that the reasonable-commander standard depends on.
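The Phase 6 comparison described above, in which the cell tests its Phase 2 foreseeability assumptions against observed effects, can be sketched in a few lines. This is a minimal illustration of my own and not a method named in AJP-3.9(B) or the CHMR-AP; the function name, the category labels and the example system names are all hypothetical. A set difference says nothing about whether an effect was foreseeable in law. It only shows where the Phase 2 record and the observed record diverge.

```python
def compare_assessment(predicted, observed):
    """Compare effects predicted at Phase 2 with effects observed at Phase 6.

    Both arguments are collections of affected-system names (hypothetical
    labels chosen by the targeting cell). Returns three sorted lists.
    """
    predicted, observed = set(predicted), set(observed)
    return {
        # Predicted at Phase 2 and seen at Phase 6: the assumption held.
        "confirmed": sorted(predicted & observed),
        # Seen at Phase 6 but absent from the Phase 2 record: feeds the
        # next iteration of target development.
        "not_predicted": sorted(observed - predicted),
        # Predicted but not seen: the model may have been over-inclusive.
        "not_observed": sorted(predicted - observed),
    }


# Illustrative inputs only; these system names are assumptions.
phase2_prediction = {"hospital_icu", "water_pumping"}
phase6_observation = {"hospital_icu", "air_raid_alerts"}
result = compare_assessment(phase2_prediction, phase6_observation)
```

In this illustration, the entries under "not_predicted" are the ones the next Phase 2 iteration would need to absorb into its dependency analysis.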

If the cycle is the discipline, the next question is what happens when the discipline meets reality. Three operational cases test the framework.

V. Testing the framework: three operational cases

Three cases test the framework against the operational record. None is a clean laboratory; each has unresolved attribution, contested impact, or both. Taken together, they show the targeting cycle and the reasonable-commander standard under live conditions.

October 2022 — the Sandworm substation attack

On 10 October 2022, Unit 74455 of Russia’s Main Intelligence Directorate (GRU), the threat actor known as Sandworm, used a “living off the land” technique to operate the MicroSCADA management instance at a Ukrainian electrical substation, sending control commands that tripped circuit breakers and caused an unplanned power outage. Two days later the same actor deployed a CaddyWiper variant against the victim’s information-technology environment, plausibly to destroy forensic evidence of the operational-technology attack. Mandiant disclosed the incident in November 2023, describing the intrusion as beginning in or before June 2022 and culminating in two disruptive events on 10 and 12 October 2022. The cyber action coincided with mass missile strikes on critical infrastructure across Ukraine.

Three points matter for the targeting-cycle analysis. First, the operation was prepared over months and timed to coincide with kinetic strikes — there was time at every phase of the cycle for the foreseeability assessment the standard requires. Second, the cyber effect operated against the same civilian-side population that the kinetic missile strikes were already affecting; the compounding effect was foreseeable. Third, Mandiant’s chief analyst publicly observed that “[t]here’s not much evidence that this attack was designed for any practical, military necessity” and that civilians “are typically the ones who suffer from these attacks” (Mandiant analysis as reported by Infosecurity Magazine, 9 November 2023). That is a private-sector analyst’s observation, not a legal finding — but it states precisely the question the reasonable-commander standard exists to answer.

December 2023 — the Kyivstar telecoms attack

On 12 December 2023, Ukraine’s largest telecommunications operator Kyivstar suffered a destructive cyberattack that disrupted mobile and internet services for approximately 24 million mobile subscribers and 1.1 million home internet users (Recorded Future News, 4 January 2024). Service was substantially restored on 20 December. Beyond the direct civilian-communications impact, the attack disrupted air-raid alert systems in the Kyiv and Sumy regions, point-of-sale terminals, automated teller machines and some banking services. The Security Service of Ukraine (SBU) cybersecurity chief Illia Vitiuk attributed the attack to Sandworm, with the Solntsepek persona acting as a hacktivist front, and stated that the attackers had been inside the Kyivstar network since at least May 2023 and had achieved full access by November 2023, before wiping thousands of virtual servers and personal computers. The SBU has stated it is gathering evidence for a potential International Criminal Court prosecution on the basis that the attack on civilian infrastructure satisfies the elements of war crimes (SBU, as reported in The Record, September 2025).

Two analytical points stand out. First, the SBU expressly noted that the Ukrainian armed forces’ own communications were not affected because they used different protocols. The attack did not degrade adversary military communications; it degraded civilian ones. The Phase 2 target-development question — whether the action against Kyivstar offered a definite military advantage as opposed to an effect on civilian morale and infrastructure — is sharply posed by that fact. Second, the disruption of air-raid alert systems is a paradigmatic reverberating effect, exactly the class of foreseeable indirect harm the NATO ACO Handbook on the Protection of Civilians (2021) directs targeting cells to weigh during target development (at p. 25). For European readers, Kyivstar is a useful pivot: the analytical work the Galić “reasonably well-informed person” formulation does in this case is precisely what NATO doctrine calls for ex ante. The civil-law-tradition criminal-law framing and the doctrinal compliance framing converge on the same question.

June 2017 — NotPetya as cautionary tale

NotPetya, deployed on 27 June 2017, propagated initially through a compromised update of M.E.Doc, a widely used Ukrainian tax-accounting software package, and then spread laterally through corporate networks using the EternalBlue exploit and credential-stealing techniques. Although it presented as ransomware, it functioned as a wiper: encrypted data could not be recovered. The malware reached organisations including A.P. Møller-Maersk, Merck & Co., FedEx subsidiary TNT Express, Mondelez International, Saint-Gobain, Reckitt Benckiser, and DLA Piper, and disrupted radiation monitoring at the Chernobyl Nuclear Power Plant. Total damages were placed above USD 10 billion by then-U.S. Homeland Security Adviser Tom Bossert, as reported by Andy Greenberg (Wired, 22 August 2018). Approximately 80 percent of infections occurred inside Ukraine; the remainder spread through interconnected corporate networks worldwide. In October 2020, six GRU Unit 74455 officers were indicted by the U.S. Department of Justice for their roles in the NotPetya operation and other Sandworm activity (U.S. DOJ indictment, as reported in Maritime Executive).

For the targeting-cycle framework, NotPetya is the textbook indiscriminate-attack case. Even on the most generous reconstruction of an ex ante assessment, the absence of a kill switch and the propagation-through-EternalBlue design rendered the actual reach of the operation unbounded at the moment of release. Article 51(4)(c) of AP I prohibits attacks “of a nature to strike military objectives and civilians or civilian objects without distinction”. In targeting-cycle terms, NotPetya was not a Phase 5 execution failure on top of a sound Phase 2 target-development analysis; the absence of any feasible mechanism to constrain propagation means the foreseeability evidence available at Phase 2 should have already been disqualifying. Robinson and Nohle’s framework for the scope of the obligation to take into account the foreseeable reverberating effects of an attack (Robinson & Nohle, 98 IRRC 107 (2016), at pp. 117–134) — which works through causation, the objective foreseeability standard, the reasonable-commander standard, and the temporal, material and geographical scope of foreseeability — applies with even greater force when the propagation mechanism itself is uncontrolled.

The Mačák and Pircher five-category typology of cyber harm (at pp. 3–5) maps cleanly onto the NotPetya footprint: direct harm to civilian systems (the M.E.Doc client base); systemic and cascading risks (Maersk’s 76 ports, Merck’s pharmaceutical supply chain, the Chernobyl monitoring system); non-tangible economic harm (more than USD 10 billion in damages); civilianisation of the digital battlefield (the global corporate exposure); and escalation risks (the open question of whether a NATO Article 5 trigger was avoided only by attribution restraint). All five categories are present at once. Whatever NotPetya was, it was not a targeting decision a reasonable commander could have defended.

What the three cases show together

The three cases sit at different points along the targeting cycle. The October 2022 substation operation is a Phase 2 / Phase 3 problem: the foreseeability evidence was available, the time to use it existed, and the question is whether the ex ante assessment took it into account or worked around it. Kyivstar is a Phase 2 problem of a different kind: the question is whether the action delivered a concrete and direct military advantage at all, given that Ukrainian military communications were unaffected. NotPetya is a Phase 2 / Phase 5 design failure: the propagation mechanism made the ex ante assessment impossible to perform honestly, and the execution-phase precautions impossible to take.

What the targeting cycle and the reasonable-commander standard offer is not a verdict but a framework for asking the right questions in the right order.

VI. What this means for practitioners

Three audiences read this blog. Each has a different operational stake in the questions worked through above. The recommendations below are written in plain English and grouped by the kind of decision each audience faces.

For the operational lawyer

The targeting cycle is not optional, but it can be done well or badly. The reasonable-commander standard does not relax for cyber operations; what changes is the foreseeability evidence the targeting cell has to put on the table before the commander can sign off. Three concrete steps follow.

First, demand structured red teaming at Phase 2.

Misidentification — including the misinterpretation of a target’s civilian-side dependencies as inert background — is one of the most common ways the ex ante foreseeability assessment goes wrong. The U.S. CHMR-AP Objective 5 (at p. 15) directs combatant commands to develop red teaming policies “with a focus on combating cognitive biases throughout joint targeting processes” and to embed the practice as a continuous function within the targeting cycle. The Joint Staff definition of a red team that the action plan codifies — an independent staff element acting as devil’s advocate and generalised contrarian, supporting plans, operations, and intelligence — gives the operational lawyer something concrete to ask for. In a cyber context, the red team’s job at Phase 2 is to challenge the assumption that the target’s civilian-side dependencies are knowable, bounded, and acceptable. NATO doctrine itself recognises cyber expertise as a board-level advisory function: the Joint Targeting Coordination Board includes a standing Cyber subject-matter expert position (AJP-3.9(B) Figure 4.2, at p. 4-7).

Second, insist on the foreseeability evidence base.

In my view, the foreseeability evidence the cell needs to produce at Phase 2 of a cyber targeting decision has three components: a network-topology map of the target environment that identifies the systems and connections in scope; a dependency graph showing which civilian functions ride on the same infrastructure; and a model of the foreseeable cascading effects of the planned action across that infrastructure. These artefacts are not named in AJP-3.9(B), and I do not claim they are. They are my practitioner inference about what the systems-approach requirement entails when the target is cyber. A reasonable commander cannot make the ex ante foreseeability judgement the standard requires without something resembling these three artefacts on the table, and the lawyer in the cell cannot advise without them either.
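
To make the three artefacts a little more concrete, here is a minimal Python sketch. Everything in it is hypothetical: the node names, the military / civilian / dual-use labels, and the dependency edges are invented for illustration and describe no real network and no artefact named in doctrine. It treats the topology map as a labelled list of systems, the dependency graph as a mapping from each system to the systems that rely on it, and the cascade model as a simple breadth-first walk outward from the target.

```python
from collections import deque

# Hypothetical topology map: every system in scope, with an illustrative label.
CATEGORY = {
    "substation_scada": "dual_use",
    "regional_grid": "dual_use",
    "military_radar": "military",
    "hospital_power": "civilian",
    "water_pumping": "civilian",
    "intensive_care": "civilian",
}

# Hypothetical dependency graph: key -> systems that depend on it.
DEPENDENTS = {
    "substation_scada": ["regional_grid"],
    "regional_grid": ["military_radar", "hospital_power", "water_pumping"],
    "hospital_power": ["intensive_care"],
}


def foreseeable_cascade(target, dependents):
    """Return each downstream system mapped to its hop distance from the target.

    A breadth-first walk: it assumes every dependency fails with certainty,
    which is a deliberate over-simplification for illustration.
    """
    hops = {target: 0}
    queue = deque([target])
    while queue:
        node = queue.popleft()
        for child in dependents.get(node, []):
            if child not in hops:  # skip systems already reached
                hops[child] = hops[node] + 1
                queue.append(child)
    del hops[target]  # report only downstream effects, not the target itself
    return hops


cascade = foreseeable_cascade("substation_scada", DEPENDENTS)

# Civilian functions that ride on the same infrastructure as the target.
civilian_effects = {
    name: distance
    for name, distance in cascade.items()
    if CATEGORY[name] == "civilian"
}

for name, distance in sorted(civilian_effects.items(), key=lambda kv: kv[1]):
    print(f"{name}: {distance} hop(s) from target")
```

The point of the sketch is only that the question "which civilian functions sit downstream, and how far?" can be asked of a structured artefact rather than left to intuition. A usable model would also need probabilities, timing, and redundancy, none of which this toy captures.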

Third, treat the documentary trail as the standard’s load-bearing element.

The reasonable-commander standard is testable in retrospect only if the documentary record is good enough to reconstruct the ex ante assessment. CHMR-AP Objective 6 (at p. 17) directs the U.S. military to develop “an enterprise-wide, comprehensive database for civilian harm operational reporting and data management” so that lessons learned can travel from one operation to the next. For the operational lawyer this is the practical question of whether the cell can show, six months or six years later, what the commander knew, when the commander knew it, and what the commander reasonably did with what was known. If the answer to any of those three questions is “we cannot reconstruct that”, the standard will not protect the commander when an inquiry or tribunal comes calling.

For the European practitioner

The doctrinal toolkit is in better shape than the institutional commitment. Galić § 58, the German Statement of Understanding on AP I, ZDv A-2141/1 § 416, and the NATO ACO Handbook on Protection of Civilians all converge on the same ex ante test. What is patchier is the institutional infrastructure that lets armed forces actually do this work at scale.

CHMR-AP Objective 7 (at p. 20) sets out a framework most European armed forces have not yet built: dedicated Civilian Harm Assessment and Investigation Coordinators at combatant commands, standing Civilian Harm Assessment Cells (CHACs) for use during operations, and Department-wide standardised assessment procedures. CHMR-AP Objective 11 (at p. 33) goes further, allocating dedicated full-time positions across Civilian Harm Mitigation and Response Officer (CHMRO) functions, Ally and Partner CHMR Officer (A&P-CHMRO) functions, Civilian Environment Teams, Red Teams, and CHACs — eleven full-time positions at U.S. European Command alone. Whatever one thinks of U.S. operational practice, this is the doctrinal-implementation gap European audiences should track most closely. Armed forces that articulate the ex ante test in their manuals but do not staff the cells that operationalise it have a doctrinal commitment without an institutional spine.

The European practitioner also has the comparative advantage of working in a legal culture that frames the assessment in terms a tribunal might one day apply. The “reasonably well-informed person” formulation of Galić travels naturally into German, French, Italian, and Dutch institutional briefings; the “reasonable commander” formulation can feel imported. That is not a reason to abandon the latter — both formulations do the same work — but it is a reason to be deliberate about which framing serves which audience.

For the policy professional

The state silence the Lieber Year Ahead 2026 piece identifies is the central policy puzzle. States reaffirm IHL’s applicability to cyber operations while remaining notably silent on how distinction, proportionality and precautions translate into operational practice in the cyber domain. That silence is not neutral. It leaves the practitioners (the targeting-cell lawyer, the JAG, the policy adviser) to do work that doctrinal commitment by states should be doing. Three policy implications follow.

Commit to documenting targeting-cycle reasoning

First, the documentary commitments matter. State practice that fails to document its targeting-cycle reasoning leaves no record from which others can learn or against which others can hold it to account. The CHMR-AP framework is one model; the NATO ACO Handbook is another; the German manual is a third. What unites them is the assumption that a defensible decision is a documented one. States that do not commit to that assumption operationally are exposed when their operations are challenged.

Ensure sufficient staffing

Second, the resourcing question is the implementation question. CHMR-AP Objective 11 names specific full-time positions because the institutional infrastructure does not appear without them. Doctrinal commitment without resourcing is not commitment. Policy professionals working in defence ministries and at NATO Headquarters should be alert to this gap in their own institutions.

AI is not the culprit

Third, the AI tempo argument runs the wrong way around in much of the disarmament discourse. The risk that AI Decision Support Systems (DSS) collapse the deliberative space available to commanders is real but secondary; the more immediate humanitarian risk is the absence of structured tools to synthesise foreseeability evidence at the speed cyber operations now require. Properly designed AI DSS expand the deliberative space rather than collapsing it, and institutional mechanisms like the CHMR-AP red teaming framework are part of how that space gets used well. Policy professionals should be more sceptical of arguments that frame AI as the cause of the tempo problem and more attentive to arguments that ask how it could be part of the response. The next post in this series turns to that question directly.

VII. Where this leaves us

The targeting cycle holds. The reasonable-commander standard holds. What strains is the foreseeability evidence at Phases 2 and 3 of the cycle, and the institutional commitment that lets the cycle actually deliver on the standard’s promise. The two legal traditions — the Anglo-American “reasonable commander” and the civil-law-tradition “reasonably well-informed person” — converge on the same ex ante test. What divides them is mostly institutional posture and vocabulary, not substance.

Three operational cases test the framework. The October 2022 Sandworm substation operation is a Phase 2 / Phase 3 problem, prepared with time to spare and still failing the foreseeability test. Kyivstar is a Phase 2 problem of a different kind — the question of whether the action delivered any concrete and direct military advantage at all. NotPetya is the textbook indiscriminate-attack case, where the propagation mechanism made the ex ante assessment impossible to perform honestly. None of the three settles a legal question. All three pose the right ones.

The post has touched but not engaged the AI/autonomy thread that runs underneath the tempo question. The next post in this series picks that up directly: the human-in-the-loop question for cyber targeting in an AI-augmented decision space. The disarmament-discourse instinct is to treat AI as the displacement of human judgement and the cause of the tempo problem. The more honest framing is that AI Decision Support Systems are part of the response to a tempo problem that exists independently of them, and that properly designed they expand rather than collapse the deliberative space the reasonable-commander standard depends on. The standard does not relax under tempo pressure; what changes is how the cell builds the evidence base the standard requires. That is the work the next post takes up.